Paul’s Perspective:

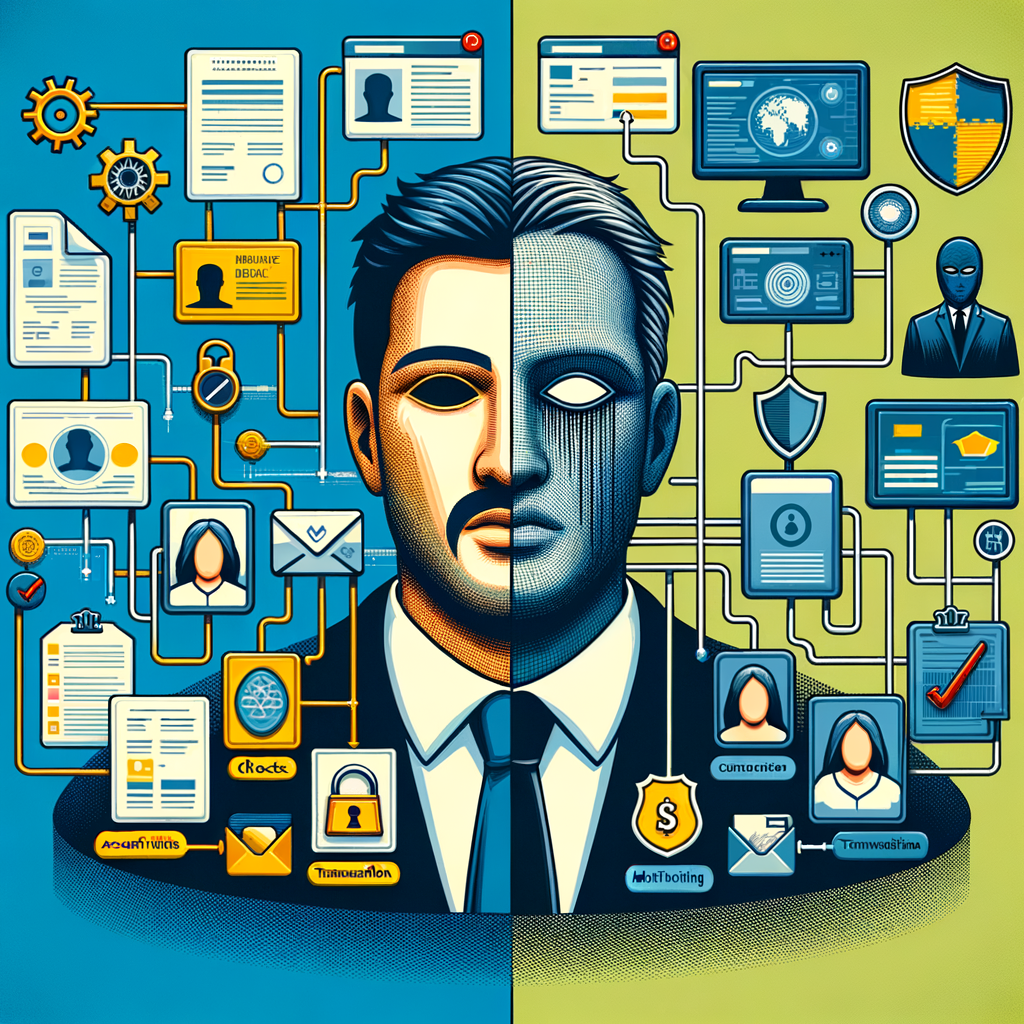

The bigger issue is not just better fakes. It is the erosion of visual evidence as a low-cost shortcut for trust in everyday business workflows.

That forces a leadership decision: keep relying on manual review and image-based proof, or redesign verification around layered controls, stronger process checks, and higher-confidence data sources. Companies that move early can reduce fraud exposure without creating unnecessary friction for customers and staff.

Key Points in Article:

- Generative image systems can now produce realistic IDs, receipts, screenshots, and profile photos in minutes, reducing the technical barrier for fraudsters.

- Processes that rely on a single image, emailed document, or screenshot are more exposed because synthetic content can look credible at first glance.

- Fraud prevention shifts from spotting obvious fakes to verifying source, context, and consistency across identity, payment, and approval systems.

- Leaders should expect pressure on compliance, customer onboarding, and support teams as manual review becomes less reliable and more expensive.

Strategic Actions:

- Review where your business accepts images, screenshots, PDFs, or photos as proof of identity, payment, or authorization.

- Identify which of those workflows depend on visual inspection by staff rather than system-based verification.

- Add layered checks such as account history, metadata review, transaction monitoring, out-of-band confirmation, and approval thresholds.

- Strengthen internal payment and vendor-change controls so one convincing image cannot trigger a transfer or account update.

- Update onboarding, customer service, finance, and compliance teams on how generative images can be used in fraud attempts.

- Test your current controls with synthetic documents and fake profile materials to find weak points.

- Document escalation paths for suspicious submissions and define when manual review must be backed by secondary verification.
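
For teams that want to see what "layered checks" might look like in practice, here is a minimal Python sketch of a decision rule in which a convincing image is never enough on its own. Everything in it is a hypothetical illustration, not a recommended policy: the `Request` fields, the `decide` function, and the threshold values are assumptions made for the example and would need to be replaced with your own signals and limits.

```python
from dataclasses import dataclass

# Hypothetical values for illustration only; tune to your own risk appetite.
APPROVAL_THRESHOLD = 1000.0   # amounts at or above this need more corroboration
MIN_ACCOUNT_AGE_DAYS = 90     # stand-in for an "account history" signal


@dataclass
class Request:
    amount: float
    image_looks_valid: bool       # result of visual or automated document review
    account_age_days: int         # account history signal
    out_of_band_confirmed: bool   # e.g. call-back to a number already on file


def decide(req: Request) -> str:
    """Return 'approve', 'escalate', or 'reject' for a payment or account change."""
    # A document that fails review is rejected outright.
    if not req.image_looks_valid:
        return "reject"

    # Count signals that are independent of the submitted image.
    independent_signals = 0
    if req.account_age_days >= MIN_ACCOUNT_AGE_DAYS:
        independent_signals += 1
    if req.out_of_band_confirmed:
        independent_signals += 1

    # Larger amounts require more corroboration; the image alone never suffices.
    required = 2 if req.amount >= APPROVAL_THRESHOLD else 1
    return "approve" if independent_signals >= required else "escalate"


if __name__ == "__main__":
    # Long-standing account, large amount, no out-of-band confirmation yet.
    print(decide(Request(5000.0, True, 400, False)))  # escalate
    # Same request after a call-back confirms it.
    print(decide(Request(5000.0, True, 400, True)))   # approve
```

The design choice worth noting is that the image only acts as a gate: passing review lets the request continue, but approval always depends on at least one signal the submitter cannot forge by generating a better picture.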


The Bottom Line:

- AI image tools are lowering the cost and skill needed to create convincing fake identities and documents.

- Audit customer verification, payment approval, and internal controls now, before weakened visual proof turns into fraud losses.

Ready to Explore More?

If you are rethinking verification, approvals, or fraud controls in light of AI-generated content, we can help map the weak points and tighten the process. Reply if you want to talk through practical safeguards that fit your business.