Paul’s Perspective:

For leadership teams, the big takeaway is that LLM capability is no longer a single “model choice” decision—it’s a system design problem. The winners will be the companies that pair the right model with the right post-training, retrieval/tooling, and governance so the output is dependable enough to put in front of customers and into core processes.

Key Points in Video:

- Modern performance gains come as much from post-training (alignment, preference optimization, evaluation) as from scaling model size.

- “Chat” quality is often a product feature built on top of base models (instruction tuning, RLHF/RLAIF, safety layers), not just the foundation model itself.

- Reasoning-focused approaches and tighter evals are increasingly used to reduce hallucinations and improve consistency on business workflows.

- Tool use (search, code execution, retrieval) is a major lever for accuracy—often more impactful than switching model vendors.

Strategic Actions:

- Separate “base model” capability from “chat/alignment” layers (instruction tuning, RLHF/RLAIF, safety policies).

- Decide where post-training matters most: helpfulness, correctness, tone, refusal behavior, or domain expertise.

- Use retrieval and tool integrations (search, databases, calculators, code) to ground answers in your data and reduce hallucinations.

- Adopt clear evaluations: define tasks, metrics, and test sets that reflect real business workflows, then track regressions.

- Put governance in place: data boundaries, logging, human review paths, and escalation for sensitive requests.

- Deploy in stages: start with internal copilots, then limited customer-facing use, then expand as reliability targets are met.
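For teams that want to see what "define tasks, metrics, and test sets, then track regressions" might look like in practice, here is a minimal sketch in Python. Everything in it is an illustrative assumption rather than something from the video: the `EvalCase` structure, the keyword-match pass criterion, the 2-point regression tolerance, and `stub_model`, which stands in for a real LLM call.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalCase:
    """One test-set entry: a prompt plus a keyword the answer must contain.

    Keyword matching is a deliberately simple, hypothetical pass criterion.
    """
    prompt: str
    expected_keyword: str


def pass_rate(model: Callable[[str], str], cases: list[EvalCase]) -> float:
    """Return the fraction of cases whose output contains the expected keyword."""
    if not cases:
        raise ValueError("test set is empty")
    passed = sum(
        case.expected_keyword.lower() in model(case.prompt).lower()
        for case in cases
    )
    return passed / len(cases)


def has_regressed(baseline: float, candidate: float, tolerance: float = 0.02) -> bool:
    """Flag a regression when the candidate falls below baseline minus tolerance."""
    return candidate < baseline - tolerance


def stub_model(prompt: str) -> str:
    """Placeholder for a real model call (assumption: swap in your own client)."""
    canned = {
        "What is our refund window?": "Refunds are accepted within 30 days.",
        "Which plan includes SSO?": "SSO is part of the Enterprise plan.",
    }
    return canned.get(prompt, "I am not sure.")


# Hypothetical test set reflecting a customer-support workflow.
CASES = [
    EvalCase("What is our refund window?", "30 days"),
    EvalCase("Which plan includes SSO?", "Enterprise"),
    EvalCase("What is the support email?", "support@example.com"),
]

if __name__ == "__main__":
    score = pass_rate(stub_model, CASES)
    print(f"pass rate: {score:.2f}")
    print(f"regressed vs. 0.90 baseline: {has_regressed(0.90, score)}")
```

The same check can double as a gate for staged deployment: a model or prompt change moves to the next stage only if its pass rate stays within tolerance of the last accepted baseline. A real evaluation would likely need richer scoring than keyword matching, such as human review or rubric-based grading.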

The Bottom Line:

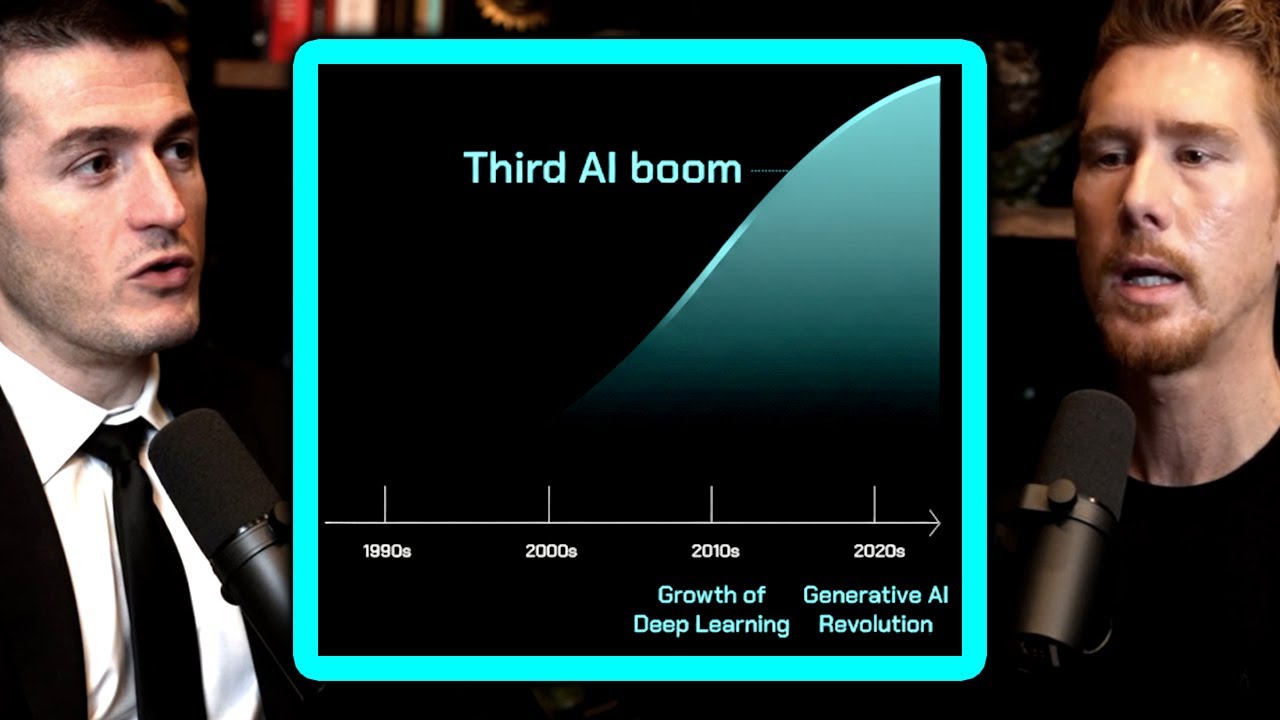

- LLMs have evolved from pattern-matching text generators into instruction-following, tool-using systems through better data, larger models, and post-training methods like RLHF.

- That evolution changes what’s practical for businesses: more reliable customer-facing automation, faster knowledge work, and new expectations for accuracy, safety, and governance.

Dive deeper > Source Video:

Ready to Explore More?

If you’re deciding where LLMs fit in your business, we can help your team map the highest-ROI use cases and design an approach that’s grounded, testable, and safe to deploy. We’ll work with you to connect models to your data and processes so the results hold up in real operations.